The three frameworks at a glance

All three frameworks share the same core idea: replace gut feel with a number that reflects both value and cost. But they define "value" and "cost" differently, and that difference determines which one fits your context.

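RICE = Reach × Impact × Confidence ÷ Effort

- R, Reach: users per quarter

- I, Impact: 0.25×–3×

- C, Confidence: 10–100%

- E, Effort: person-months

WSJF = (Business Value + Time Criticality + Risk Reduction) ÷ Job Size

- BV, Business Value

- TC, Time Criticality

- RR, Risk Reduction

- JS, Job Size (Fibonacci)

ICE = Impact × Confidence × Ease

- I, Impact: 1–10

- C, Confidence: 1–10

- E, Ease: 1–10

- Score range: 1–1,000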

All three can run inside Jira. Jira has no built-in scoring framework, but Priority Scoring for Jira adds RICE, WSJF, and ICE as separate custom fields, so you can have all three on the same issue and switch between frameworks in the dashboard with one click.

RICE scoring — user value per unit of effort

RICE was developed by Sean McBride at Intercom to solve a specific problem: growing product teams couldn't agree on feature priority because every stakeholder had a different instinct about what "important" meant. The formula forces teams to answer four concrete questions about each backlog item.

What RICE optimizes for: The highest-scoring item reaches many users, moves each of them meaningfully, is backed by strong confidence that it will work, and doesn't take long to build. It's a measure of user value delivered per person-month of work.
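As a minimal sketch of the arithmetic, assuming the formula given in the FAQ (Reach × Impact × Confidence ÷ Effort) and the units listed at the top of this article. The function name `rice_score`, the range checks, and the sample numbers are illustrative assumptions, not code from Intercom or from the Priority Scoring app.

```python
def rice_score(reach: float, impact: float, confidence: float, effort: float) -> float:
    """Return Reach x Impact x Confidence / Effort.

    reach:      users affected per quarter
    impact:     multiplier on the 0.25x-3x scale
    confidence: fraction between 0.10 and 1.00 (i.e. 10-100%)
    effort:     person-months, must be positive
    """
    if not 0.25 <= impact <= 3:
        raise ValueError("impact is expected on the 0.25x-3x scale")
    if not 0.10 <= confidence <= 1.0:
        raise ValueError("confidence is expected as a fraction between 0.10 and 1.00")
    if effort <= 0:
        raise ValueError("effort must be a positive number of person-months")
    return reach * impact * confidence / effort


# Hypothetical backlog item: 800 users/quarter, impact 1.5x,
# 80% confidence, 4 person-months of work.
example = rice_score(reach=800, impact=1.5, confidence=0.80, effort=4)
print(round(example, 1))
```

Because Effort is the divisor, doubling the estimate halves the score, which is why consistent effort definitions matter more than precise ones.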

RICE works best when:

- You have real data on how many users will be affected (analytics, support tickets, research)

- Your team prioritizes features by breadth of user impact

- You're building consumer-facing or broadly-used B2B software

- Team size is roughly 10–50 people with some discovery process

RICE struggles when: Your backlog is driven by regulatory deadlines, partner commitments, or strategic initiatives that affect few users but matter enormously to the business. A compliance feature that affects 5 enterprise clients but prevents a $2M contract loss will score low on RICE, even though it clearly deserves a high priority.

WSJF scoring — economic value per unit of time

WSJF (Weighted Shortest Job First) comes from the Scaled Agile Framework (SAFe), where it's used to sequence epics and features across multiple Agile Release Trains (ARTs). The formula answers a different question than RICE: not "which feature benefits the most users?" but "which job should we do first to minimize the economic cost of waiting?"

The numerator is Cost of Delay — broken into three components:

- Business Value: Relative value to the business or end-user if delivered now

- Time Criticality: Is there a deadline, a market window, or a dependency that makes delay costly?

- Risk Reduction / Opportunity Enablement: Does this item reduce a technical risk or unlock future work?

All four dimensions (including Job Size as the denominator) are scored on a relative, modified Fibonacci scale, typically 1, 2, 3, 5, 8, 13, 20 (with 20 standing in for 21). You score items relative to each other rather than on an absolute scale. The team picks a "reference item" and asks: is this feature twice as valuable? Half the effort? Scoring is done in a group session, often at PI Planning.
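The calculation described above can be sketched as follows. This is a minimal illustration that assumes every dimension must sit on the 1, 2, 3, 5, 8, 13, 20 scale; the names `FIB_SCALE` and `wsjf_score` and the sample epic are hypothetical.

```python
# Relative scale named in the text; used here to validate every input.
FIB_SCALE = (1, 2, 3, 5, 8, 13, 20)


def wsjf_score(business_value: int, time_criticality: int,
               risk_reduction: int, job_size: int) -> float:
    """Return Cost of Delay / Job Size, where
    Cost of Delay = Business Value + Time Criticality + Risk Reduction."""
    inputs = {
        "business_value": business_value,
        "time_criticality": time_criticality,
        "risk_reduction": risk_reduction,
        "job_size": job_size,
    }
    for name, value in inputs.items():
        if value not in FIB_SCALE:
            raise ValueError(f"{name}={value} is not on the scale {FIB_SCALE}")
    cost_of_delay = business_value + time_criticality + risk_reduction
    return cost_of_delay / job_size


# Hypothetical epic: BV 8, TC 3, RR 2, Job Size 2.
print(wsjf_score(8, 3, 2, 2))
```

Since all inputs are relative, the absolute number carries little meaning on its own; only the ordering within one scoring session does.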

WSJF works best when:

- Your team runs SAFe with Program Increments (PI Planning)

- You have deadline-driven work where the cost of delay is concrete

- Multiple teams compete for shared capacity and need a transparent sequencing method

- You're prioritizing at the initiative or epic level, not individual user stories

WSJF struggles when: Your backlog is driven by user research rather than business deadlines. In that case Time Criticality lands at 1 or 2 for most features, which flattens the ranking and makes WSJF feel like a complicated version of "Business Value first."

For a detailed Jira setup guide including Fibonacci templates and PI Planning configuration, see WSJF in Jira: SAFe Prioritization Without Spreadsheets.

ICE scoring — fast directional ranking

ICE was popularized by Sean Ellis, founder of GrowthHackers, as a quick way for growth teams to triage large pools of experiment ideas. The simplicity is intentional — no per-user estimates, no Fibonacci workshop, no discovery data needed. You score Impact, Confidence, and Ease each 1–10, multiply them together, and rank.

What ICE optimizes for: Ideas with high impact, high confidence, and low effort (= high ease) bubble to the top. It rewards quick wins — experiments that are easy to run and likely to work.
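A minimal sketch of ICE triage, assuming each dimension is an integer from 1 to 10 as described above. The helper `ice_score` and the three experiment ideas are made up for illustration.

```python
def ice_score(impact: int, confidence: int, ease: int) -> int:
    """Return Impact x Confidence x Ease, each scored 1-10."""
    for name, value in (("impact", impact), ("confidence", confidence), ("ease", ease)):
        if not 1 <= value <= 10:
            raise ValueError(f"{name} must be between 1 and 10, got {value}")
    return impact * confidence * ease


# Hypothetical experiment ideas: (impact, confidence, ease).
ideas = {
    "Onboarding checklist": (7, 5, 10),
    "Pricing page test": (8, 6, 4),
    "Referral email": (5, 8, 6),
}

# Rank from highest to lowest ICE score.
ranked = sorted(ideas, key=lambda name: ice_score(*ideas[name]), reverse=True)
for name in ranked:
    print(name, ice_score(*ideas[name]))
```

Note how the easiest idea wins despite not having the highest impact, which is the quick-win bias the text describes.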

ICE works best when:

- You have many small experiments or hypotheses to triage (10+ ideas per week)

- Discovery time is limited — you need a score in two minutes, not two days

- Your team is small and early-stage (under 10 people)

- You're ranking growth loops, A/B tests, marketing experiments, or MVP feature decisions

ICE's trade-off: Without a Reach dimension, ICE doesn't distinguish between an idea that helps 5 users and one that helps 5,000. For backlogs where user scale varies widely, this is a meaningful blind spot. Teams that start with ICE often graduate to RICE once their product has enough data to estimate reach reliably.

Side-by-side comparison

| Criterion | RICE | WSJF | ICE |
|---|---|---|---|
| Origin | Intercom (Sean McBride) | SAFe (Dean Leffingwell) | GrowthHackers (Sean Ellis) |
| Optimizes for | User value per effort | Economic value per time | Fast directional ranking |
| Data needed | User reach estimates | Relative team scoring | None (pure judgment) |
| Scoring session | Individual or async | Group (PI Planning) | Individual, 2 min/issue |
| SAFe-compatible | Partial | Native | No |
| Handles deadlines | No | Yes (Time Criticality) | No |
| Handles user scale | Yes (Reach) | Indirect | No |
| Learning curve | Medium | High (Fibonacci, CoD) | Low |
| Best team size | 10–100 | 50+ (enterprise) | 1–20 |
| Score range | 0–∞ (typically 0–500) | Typically 0.3–10 | 1–1,000 |

Decision tree: pick your framework

Answer the questions in order. Stop at the first match.
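One way to read "stop at the first match" is as an ordered chain of checks. The sketch below assumes the order implied by the recommendations in the FAQ: SAFe with PI Planning first, then reliable reach data, otherwise ICE. The function and parameter names are hypothetical.

```python
def pick_framework(runs_safe_pi_planning: bool, has_reliable_reach_data: bool) -> str:
    """Return the first framework whose condition matches, checked in order."""
    # 1. SAFe teams with PI Planning: WSJF is the native choice.
    if runs_safe_pi_planning:
        return "WSJF"
    # 2. Reliable user reach data and breadth-of-impact priorities: RICE.
    if has_reliable_reach_data:
        return "RICE"
    # 3. Otherwise start lightweight with ICE.
    return "ICE"


print(pick_framework(runs_safe_pi_planning=False, has_reliable_reach_data=True))
```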

Can you use more than one?

Yes — and the best-run product organizations often do. A common split: use RICE at the feature level (individual backlog items, user story sizing) and WSJF at the initiative level (quarterly planning, epic sequencing across teams). ICE can serve as a fast pre-filter — quickly eliminating ideas that don't meet a minimum bar before investing the time in a full RICE score.
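The pre-filter idea can be sketched as a two-stage pipeline: score everything cheaply with ICE, then spend RICE estimation effort only on the survivors. The threshold `ICE_MIN`, the helper names, and the sample ideas are all assumptions chosen for illustration.

```python
ICE_MIN = 100  # hypothetical minimum bar; calibrate to your own backlog


def ice(impact: int, confidence: int, ease: int) -> int:
    return impact * confidence * ease


def rice(reach: float, impact: float, confidence: float, effort: float) -> float:
    return reach * impact * confidence / effort


def prefilter(ice_inputs: dict, ice_min: int = ICE_MIN) -> list:
    """Stage 1: keep only ideas whose ICE score clears the bar."""
    return [name for name, triple in ice_inputs.items() if ice(*triple) >= ice_min]


def rank_survivors(survivors: list, rice_inputs: dict) -> list:
    """Stage 2: full RICE score for survivors, highest first."""
    scored = {name: rice(*rice_inputs[name]) for name in survivors}
    return sorted(scored.items(), key=lambda pair: pair[1], reverse=True)


# Hypothetical ideas with quick ICE estimates: (impact, confidence, ease).
ice_inputs = {"Dark mode": (3, 5, 4), "Bulk export": (7, 6, 5), "SSO login": (8, 7, 3)}
survivors = prefilter(ice_inputs)

# RICE inputs are only estimated for ideas that survived stage 1:
# (reach per quarter, impact multiplier, confidence fraction, person-months).
rice_inputs = {"Bulk export": (400, 1, 0.8, 2), "SSO login": (150, 2, 0.5, 3)}
for name, score in rank_survivors(survivors, rice_inputs):
    print(name, round(score, 1))
```

The point of the split is cost: the ICE pass takes minutes, so the slower reach and effort estimates are only produced for ideas that have a realistic chance of being built.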

In Jira, all three frameworks coexist as separate custom fields. A single issue can have a RICE Score of 240, a WSJF Score of 6.5, and an ICE Score of 350 simultaneously. The Priority Scoring dashboard lets you switch the ranking view between frameworks with one click — useful when the same backlog is reviewed by both a product team (RICE-first) and an engineering leadership team (WSJF-first).

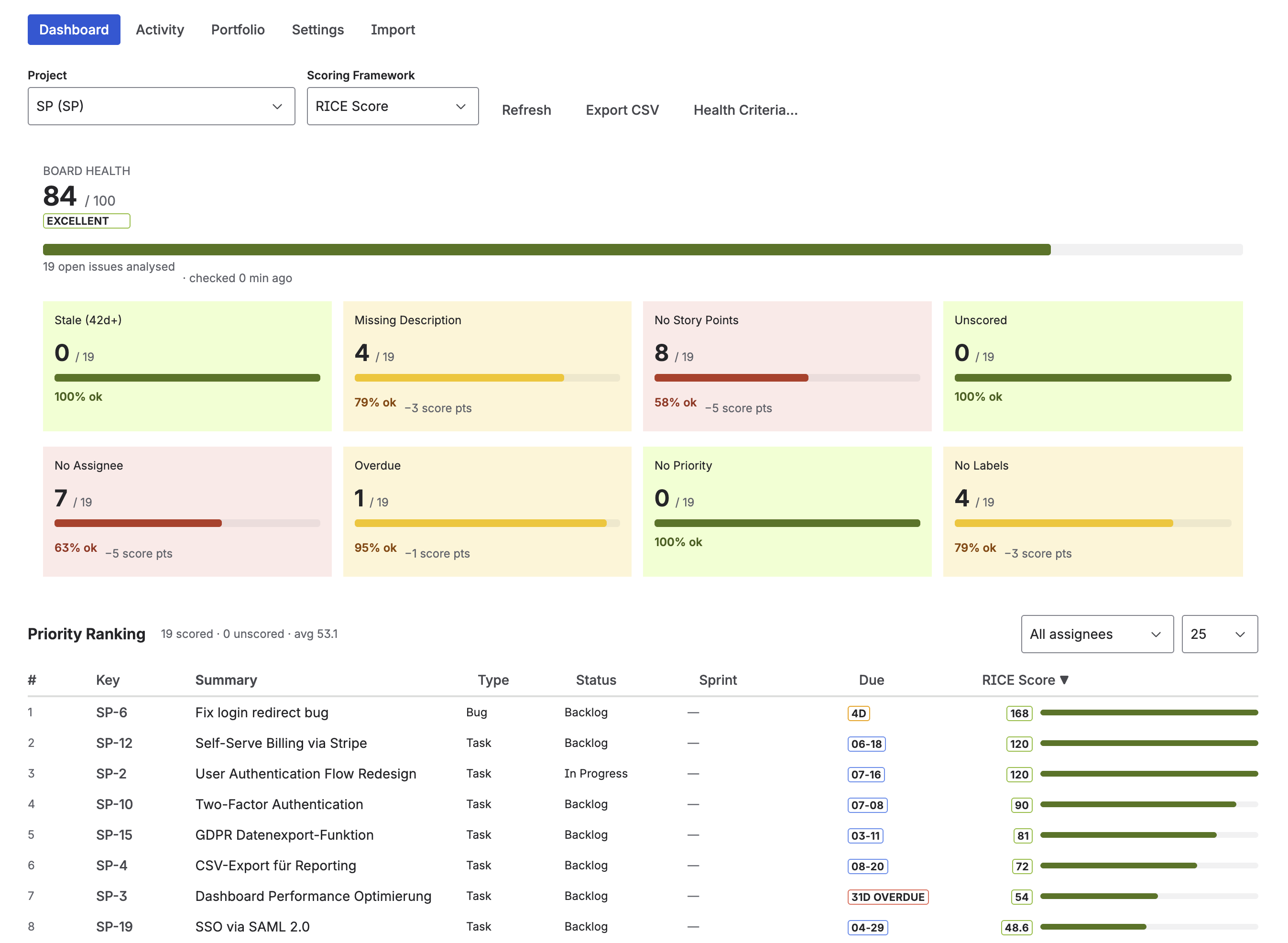

Switch between RICE, WSJF, and ICE ranking in the Priority Scoring dashboard — same backlog, different lens

Starting from scratch: a practical first week

If your team has never used structured prioritization, the biggest risk is overthinking the setup. Here's a simple sequence that works for most teams:

- Day 1: Install Priority Scoring for Jira. Add one framework field (ICE if you're small, RICE if mid-size) to your most active project screen.

- Days 2–3: Score the top 20 issues in your backlog. Don't aim for perfect; aim for consistent. Use the same definitions for Effort and Reach across all 20.

- Day 4: Run your next sprint planning using the ranked view. Notice where the score agrees with your instinct, and where it doesn't. The disagreements are the most valuable — they force a conversation.

- Week 2+: Calibrate thresholds. Score new issues as they arrive. Consider adding a second framework for cross-validation if useful.
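The Day 4 comparison between score and instinct can be made concrete by ranking the same issues both ways and listing the largest gaps. This is a sketch under assumptions: the issue keys, the scores, and the `min_gap` threshold are invented, and `rank_disagreements` is a hypothetical helper, not part of any Jira app.

```python
def rank_disagreements(gut_order: list, scores: dict, min_gap: int = 3) -> list:
    """Return (issue, gut_rank, score_rank) tuples where the two rankings
    differ by at least min_gap positions, largest gap first."""
    score_order = sorted(scores, key=scores.get, reverse=True)
    score_rank = {issue: pos for pos, issue in enumerate(score_order, start=1)}
    gaps = []
    for gut_rank, issue in enumerate(gut_order, start=1):
        if abs(gut_rank - score_rank[issue]) >= min_gap:
            gaps.append((issue, gut_rank, score_rank[issue]))
    return sorted(gaps, key=lambda row: abs(row[1] - row[2]), reverse=True)


# Hypothetical backlog: the team's instinctive order vs. computed scores.
gut_order = ["PROJ-1", "PROJ-2", "PROJ-3", "PROJ-4", "PROJ-5"]
scores = {"PROJ-1": 50, "PROJ-2": 300, "PROJ-3": 120, "PROJ-4": 10, "PROJ-5": 400}

for issue, gut_rank, score_rank in rank_disagreements(gut_order, scores):
    print(f"{issue}: gut rank {gut_rank}, score rank {score_rank}")
```

The issues this returns are the ones worth discussing in planning, since either the instinct or one of the scoring inputs is off.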

Tip: Don't wait until your scoring system is "perfect" before using it. An imperfect RICE score that's 80% right is far better than no score, because it forces the team to articulate and debate assumptions instead of deferring to the loudest voice in the room.

Frequently asked questions

What is the difference between RICE, WSJF, and ICE scoring?

RICE (Reach × Impact × Confidence ÷ Effort) measures user value per unit of effort. WSJF ((Business Value + Time Criticality + Risk Reduction) ÷ Job Size) measures economic value per unit of time and is the native SAFe framework. ICE (Impact × Confidence × Ease) is the fastest and simplest — no discovery data needed, scores in minutes. All three are available as native Jira fields in Priority Scoring for Jira.

Is RICE or WSJF better for Jira?

Neither is universally better — they optimize for different things. RICE is better for product teams focused on user impact. WSJF is better for enterprise teams in SAFe environments where cost of delay and time criticality matter. Many large organizations use RICE at the feature level and WSJF at the initiative level, running both in Jira simultaneously.

Which prioritization framework should I use in Jira?

Use WSJF if your team runs SAFe with PI Planning. Use RICE if you have reliable user reach data and prioritize by breadth of user impact. Use ICE if you need fast, lightweight scoring without detailed discovery. When in doubt, start with ICE and upgrade to RICE once you have user analytics to support Reach estimates.

Can I use multiple scoring frameworks in Jira at the same time?

Yes. Priority Scoring for Jira adds RICE, WSJF, and ICE as separate custom fields — a single issue can carry all three scores simultaneously. The dashboard ranking view switches between frameworks with one click, useful when the same backlog is reviewed by both product teams (RICE) and engineering leadership (WSJF).

What is the WSJF formula?

WSJF = Cost of Delay ÷ Job Size, where Cost of Delay = Business Value + Time Criticality + Risk Reduction/Opportunity Enablement. All dimensions are scored on a relative, modified Fibonacci scale (1, 2, 3, 5, 8, 13, 20). Items with higher WSJF scores should be done first. Typical scores range from 0.3 to 10. See the full guide: WSJF in Jira.

Does Jira support RICE, WSJF, and ICE natively?

No. Jira does not include any scoring framework out of the box. You add them via a Marketplace app. Priority Scoring for Jira adds all three as native custom fields (sliders, live score calculation, ranked dashboard) without any scripting or custom formulas to maintain.

All three frameworks, one Jira app

Priority Scoring for Jira adds RICE, WSJF, and ICE as native custom fields. Switch between frameworks in the dashboard. Let the Rovo AI agent score issues automatically. Free trial included.